Partner at Charbonnet Law Firm LLC

Practice Areas: Car Accident, Personal Injury

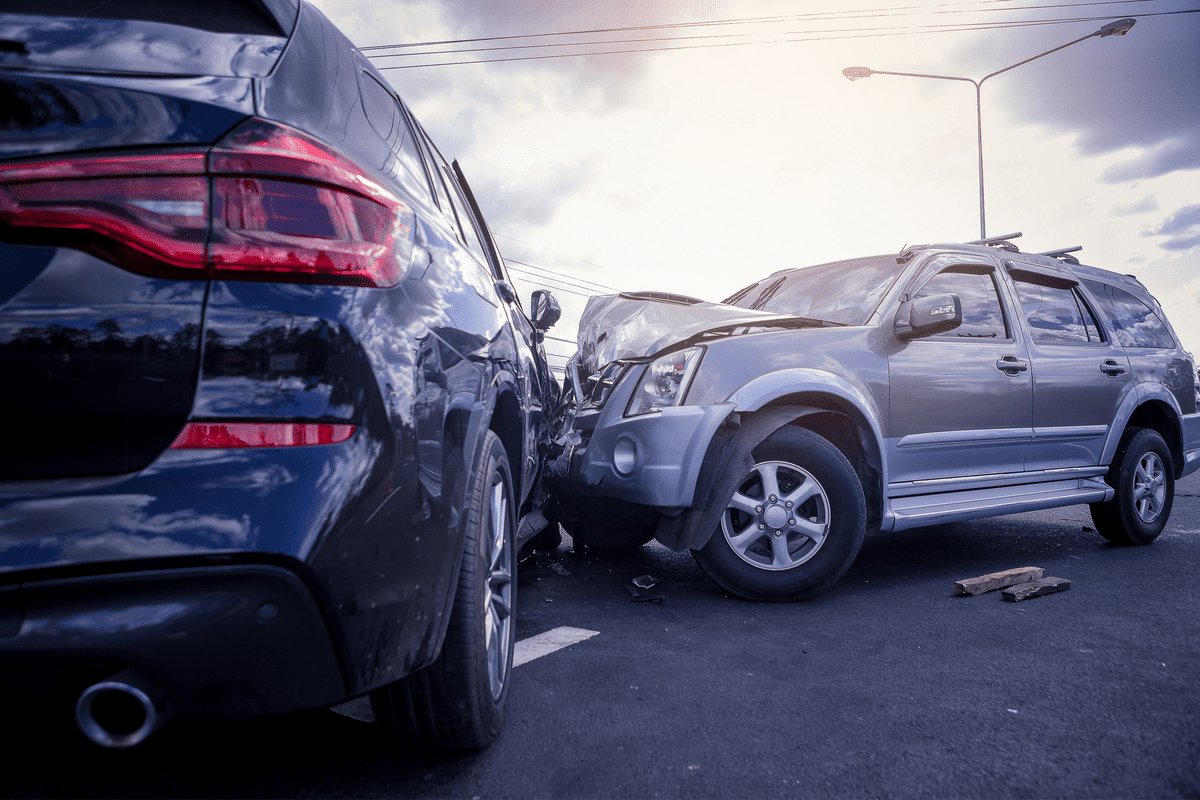

Self-driving cars are no longer just an idea from science fiction. Many of today’s vehicles already use some form of autonomous technology—from lane assistance to fully automated driving systems. But when these vehicles are involved in an accident in New Orleans, things get complicated fast. Who is responsible? The human behind the wheel? The car manufacturer? The software company? Or someone else entirely?

This article explores how fault is determined in accidents involving autonomous vehicle features, how Louisiana law fits in, and what you should do if you’re ever involved in one of these incidents.

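Not all self-driving cars work the same way. NHTSA uses the six levels of driving automation defined by SAE International, from Level 0 to Level 5:

- Level 0 (No Driving Automation): the human driver performs all driving tasks, although the vehicle may issue warnings.
- Level 1 (Driver Assistance): the vehicle can help with either steering or braking and acceleration, such as adaptive cruise control.
- Level 2 (Partial Automation): the vehicle can control steering and braking/acceleration at the same time, but the driver must stay engaged and supervise at all times.
- Level 3 (Conditional Automation): the vehicle can drive itself under certain conditions, but the driver must be ready to take over when prompted.
- Level 4 (High Automation): the vehicle can drive itself without human intervention within specific conditions or areas.
- Level 5 (Full Automation): the vehicle can drive itself everywhere, under all conditions.

Most automated features on vehicles today operate at Level 2, and only a few reach Level 3, so human supervision is still essential.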

When an autonomous vehicle crashes, the question of fault becomes more layered than in traditional accidents. Here are some possible responsible parties.

If a driver was supposed to be supervising the vehicle and failed to take control when needed, they may be considered at fault. Distraction, misuse of the system, or overreliance on automation can lead to human liability.

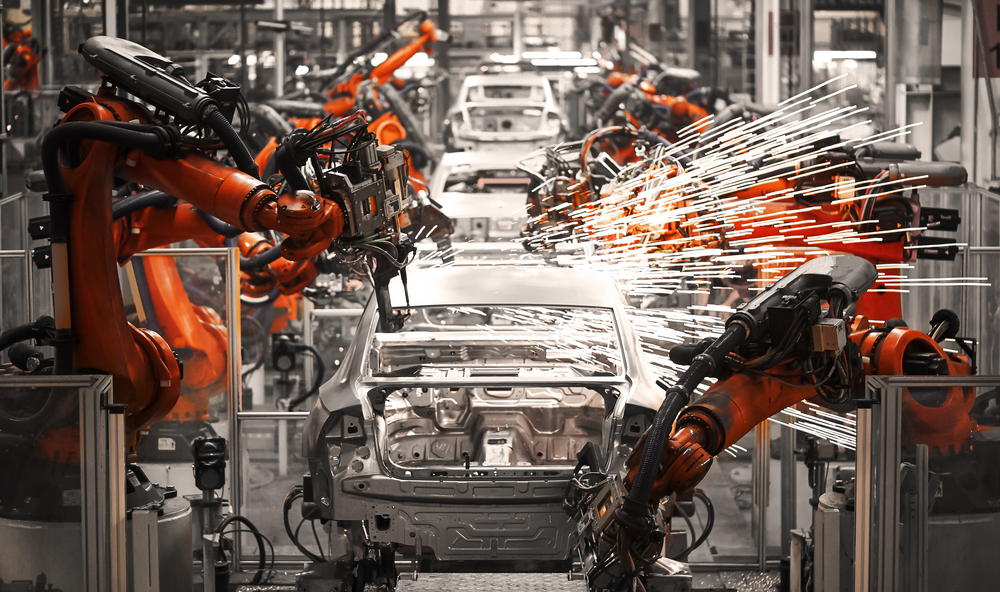

If a crash occurred due to a flaw in the vehicle’s design or a faulty sensor, the manufacturer may be held responsible. This includes issues with brakes, cameras, or onboard computers.

Some vehicles rely on third-party companies to develop their autonomous systems. If a software glitch leads to an accident, the software developer might share the blame.

Poor maintenance, missed updates, or failure to inspect critical systems can also lead to accidents. If a company or service center fails to update software or repair faulty hardware, they could be liable.

Sometimes, another driver, a pedestrian, or even a poorly maintained road could be part of the problem. In those cases, the fault might be shared.

In Louisiana, fault in an accident can be shared under the state's comparative fault system. If both a driver and a flaw in the vehicle's software contributed to a crash, the driver and the company responsible for the software may each be held partially liable. Compensation is then adjusted based on each party's share of the blame.

The state also passed Senate Bill 147, which sets safety rules for driverless vehicles. If a system failure causes harm, responsibility falls on the company behind the technology, not on the car itself.

In 2018, Elaine Herzberg became the first pedestrian killed by a self-driving vehicle. The Uber test car, operating in autonomous mode, struck her while she was walking her bike across the street. The safety driver inside the car, Rafaela Vasquez, was later charged with negligent homicide. Investigations revealed both human distraction and software failures played a role.

Walter Huang, a Tesla driver, died in a 2018 crash while using Autopilot. His vehicle hit a barrier on a California freeway. The family sued Tesla, claiming the software failed to recognize road hazards. The case highlighted issues with how Autopilot was marketed versus how it actually functioned.

According to Waymo, the Waymo Driver incurred zero bodily injury claims over more than 3.8 million miles driven in rider-only mode, with no human behind the steering wheel.

| Responsible Party | Potential Causes of Liability | Examples |
|---|---|---|
| Human Driver | Failure to intervene, misuse of AV features | Distracted driving during AV operation |
| Vehicle Manufacturer | Design defects, inadequate safety features | Faulty sensors leading to collision |
| Software Developer | Programming errors, system malfunctions | Navigation system directing vehicle into hazardous situations |
| Maintenance Provider | Negligent maintenance, failure to update software | Outdated software causing system failure |
| Third Parties | Actions of other drivers, pedestrians, or entities | Another driver running a red light causing AV to crash |

After any vehicle crash, safety comes first. Check for injuries and call 911. Next, take as many photos as possible of the scene, including damage to both vehicles and any relevant street signs or signals. If the car was in autonomous mode, note that.

Collect contact details from witnesses and other parties involved. Ask if the vehicle provides a crash report or if there is onboard data you can request. Lastly, speak with a lawyer who understands how AV systems and liability work. They can help you gather evidence, build a case, and pursue compensation if needed.

Depending on what caused the crash, the driver, carmaker, software company, or others may be responsible. Each case must be investigated based on its facts.

Pedestrians can share the blame, too. If a pedestrian's actions contributed to the accident, they may be held partially at fault under Louisiana's comparative fault system.

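Under comparative fault, each party involved is assigned a percentage of blame, and your compensation is reduced by your own share. For example, if your damages total $100,000 and you are found 20% at fault, your recovery would be reduced to $80,000.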

Louisiana has also enacted legislation addressing safety requirements for driverless vehicles, including rules for how they operate and who is responsible after a crash.

If you are involved in an accident with an autonomous vehicle, call emergency services, document everything, gather witness details, and talk to a lawyer experienced in AV claims.

As cars become smarter, the laws around them must evolve, too. When an autonomous vehicle feature contributes to a crash, determining who's at fault takes careful investigation. The cause might be human error, faulty programming, poor maintenance, or some combination of these. Louisiana's legal system accounts for shared responsibility, but building a strong case still takes legal experience and a deep understanding of how these technologies work.

If you’ve been involved in an accident involving a self-driving feature, it’s essential to know your rights. At Charbonnet Law Firm, LLC, we understand these new challenges and can help you figure out the next steps with clarity and care.

With over 50 years of legal experience serving families in the New Orleans area and surrounding Louisiana communities, our firm takes pride in providing clients with personalized legal services tailored to individual needs.